WHAT IS A BOT?

A bot is a piece of software that performs automated tasks without human intervention, often imitating human behaviour. Say you are on a website and would like some basic information about a product. The site owner can install a bot and feed it the information that a first-time visitor might need.

The bot picks up keywords from your question and returns the answer you need. It can answer many such questions at the same time, whereas assigning a human to this work would cost a lot of time and money. In short, bots are used to do tasks faster than humans can.
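To make the keyword idea concrete, here is a minimal sketch in shell, the language used for the commands later in this post. The function name `answer`, the keywords and the canned replies are hypothetical placeholders; real chatbots use far more sophisticated matching.

```sh
#!/bin/sh
# Minimal keyword-matching FAQ bot (illustrative sketch).
# Keywords and answers below are hypothetical placeholders.

answer() {
  # Lower-case the visitor's question so matching is case-insensitive.
  question=$(printf '%s' "$1" | tr '[:upper:]' '[:lower:]')
  # Each branch is one FAQ entry; the first matching pattern wins.
  case "$question" in
    *price*|*cost*)    echo "See our pricing page for current plans." ;;
    *ship*|*deliver*)  echo "Orders usually ship within two business days." ;;
    *refund*|*return*) echo "Items can be returned within 30 days." ;;
    *)                 echo "Sorry, I did not understand. A human will follow up." ;;
  esac
}

answer "How much does it COST?"
```

Because the first pattern that matches wins, more specific keywords should be listed before broader ones.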


HOW TO DETECT GOOD AND BAD BOTS?

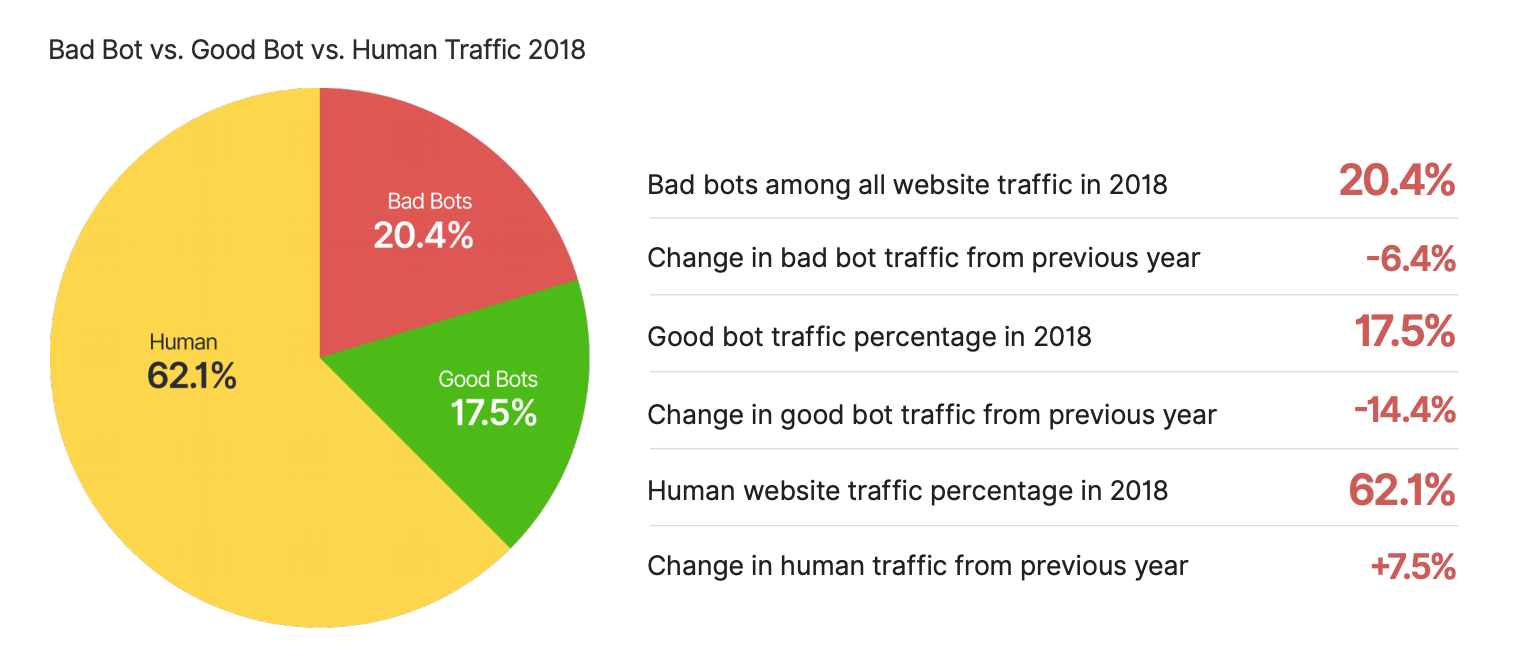

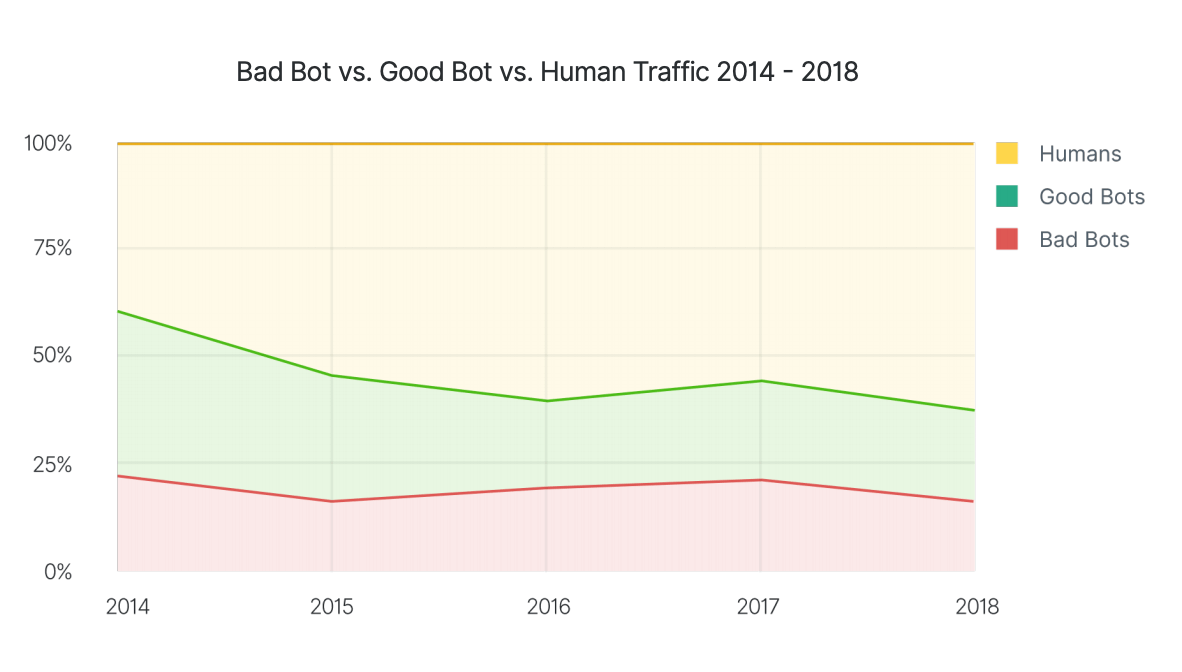

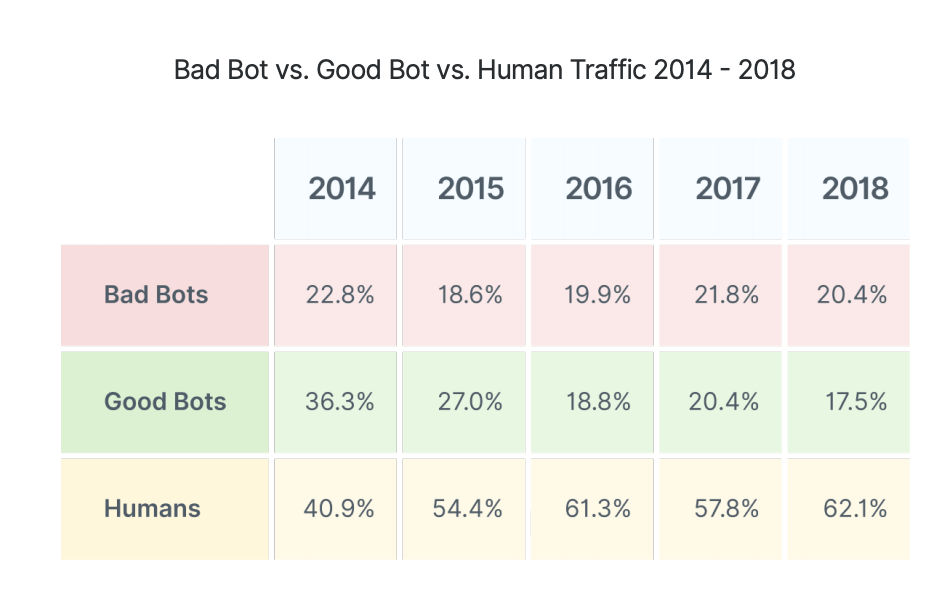

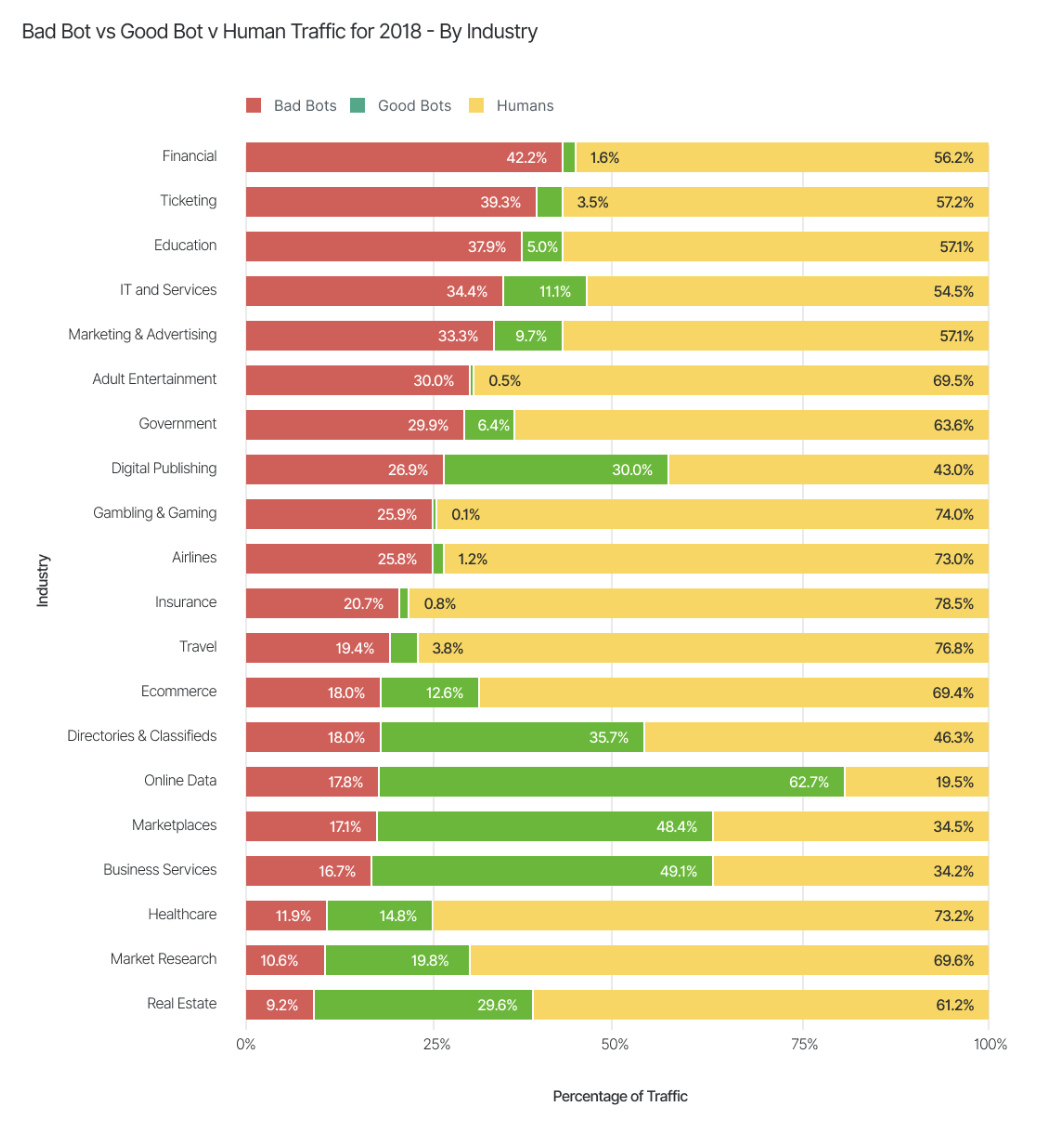

A bot is an internet robot. Bots make up a large share of internet traffic and are used to scan content, interact with users, find targets, and more. While many bots make work easier, some are malicious. In 2018, 37.9% of web traffic was not generated by humans.

According to Distil Networks, good bots make up about 17.5% of web traffic. They help organizations serve customers better and make work easier.

Websites commonly publish rules for bots in a robots.txt file, which states which parts of the site bots may crawl. A bot that breaks these rules should be denied access and blocked from the website. However, robots.txt is only advisory: if the file is the only restriction and no other measures are in place, there is no way to enforce its rules. A bot that does not follow these rules is called a bad bot.
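As a rough illustration of how a well-behaved crawler might honor robots.txt, the sketch below checks a path against the `User-agent: *` group. The function name `is_disallowed` and the sample rules are hypothetical, and the matching is deliberately simplified: plain prefix matching only, with `Allow` lines, wildcards and groups that list several user agents ignored.

```sh
#!/bin/sh
# Sketch: does the "User-agent: *" group of a robots.txt disallow a path?
# Simplified: prefix matching only; Allow, wildcards and multi-agent groups ignored.

robots_txt='User-agent: *
Disallow: /admin/
Disallow: /cart

User-agent: BadBot
Disallow: /'

is_disallowed() {
  # $1 = robots.txt contents, $2 = request path; exit status 0 if disallowed
  printf '%s\n' "$1" | awk -v path="$2" '
    tolower($1) == "user-agent:" { in_star = ($2 == "*"); next }
    in_star && tolower($1) == "disallow:" && $2 != "" && index(path, $2) == 1 { found = 1 }
    END { if (found) exit 0; exit 1 }
  '
}

is_disallowed "$robots_txt" /cart/checkout && echo "/cart/checkout: disallowed"
```

Nothing forces a bot to run such a check: a bad bot can simply skip it, which is why robots.txt alone cannot enforce anything.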

Bad bots are used to perform Denial of Service (DoS) attacks, steal sensitive information, and carry out various other malicious activities. According to Distil Networks' report on 2018 traffic, bad bots accounted for roughly one in five web requests, about 20% of all traffic.
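The traffic shares quoted so far fit together. Assuming all non-human traffic is either good or bad bots, the bad bot share is the difference between the two Distil Networks figures:

```latex
\underbrace{37.9\%}_{\text{non-human traffic}} - \underbrace{17.5\%}_{\text{good bots}} = \underbrace{20.4\%}_{\text{bad bots}} \approx \tfrac{1}{5}
```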

These bots are organized into botnets and controlled by C&C (Command and Control) servers. This centralization is also a weakness: taking down the C&C server can disable the bots it controls.

Distil Networks reports that, based on an analysis of hundreds of billions of bad bot requests in 2018, simple bots, which are easy to detect and defend against, accounted for 26.4 percent of bad bot traffic. Meanwhile, 52.5 percent came from "moderately" sophisticated bots, capable of using headless browser software as well as JavaScript to conduct illicit activities.

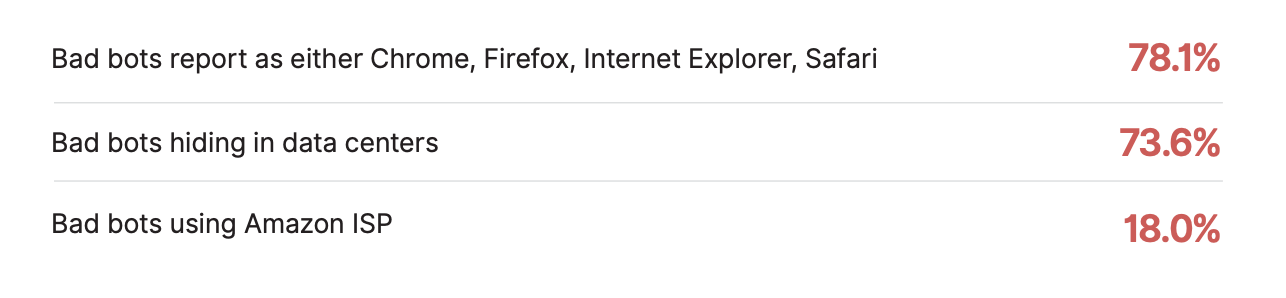

A total of 73.6 percent of bad bots are classified as Advanced Persistent Bots (APBs), which are able to cycle through random IP addresses, switch their digital identities, and mimic human behavior.
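The 73.6 percent figure lines up with the breakdown above if APBs are taken to be the moderately sophisticated and sophisticated bots combined (an interpretation, not something stated explicitly here), that is, everything except the simple bots:

```latex
100\% - \underbrace{26.4\%}_{\text{simple}} = 73.6\% \quad\text{and}\quad 73.6\% - \underbrace{52.5\%}_{\text{moderate}} = \underbrace{21.1\%}_{\text{sophisticated}}
```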

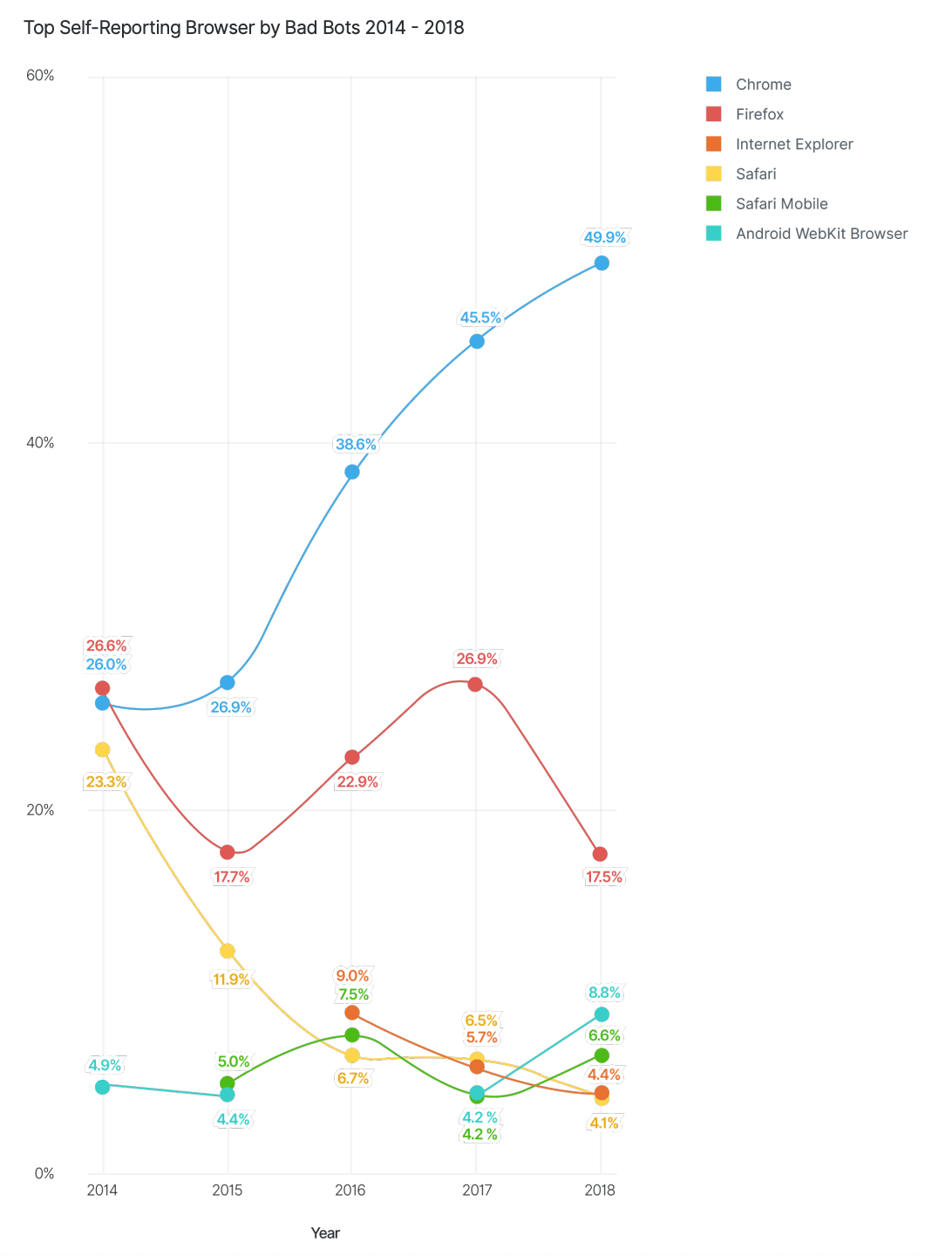

About 50 percent of bad bots use Google Chrome, and 73.6 percent of bad bot traffic originates from data centers. Amazon was the largest single origin of bad bot traffic, at 20%.

BAD BOT DETECTION IN DIFFERENT INDUSTRIES

Every industry is affected by bad bots. A few examples:

1. Airlines: Bots are mostly used here to scrape content such as flight information, pricing, and seat availability. Fraudsters also try to break into customer accounts to steal loyalty points and stored credit card information.

2. Ecommerce: Many ecommerce sites sell the same products, so competition is fierce, and competitors use bad bots to scrape pricing information. Criminals also use bots to steal gift card and wallet balances from existing users.

3. Ticketing: This is a big scam, with a whole ecosystem of resellers who use bots to buy event tickets in bulk and then sell them to consumers at higher prices.

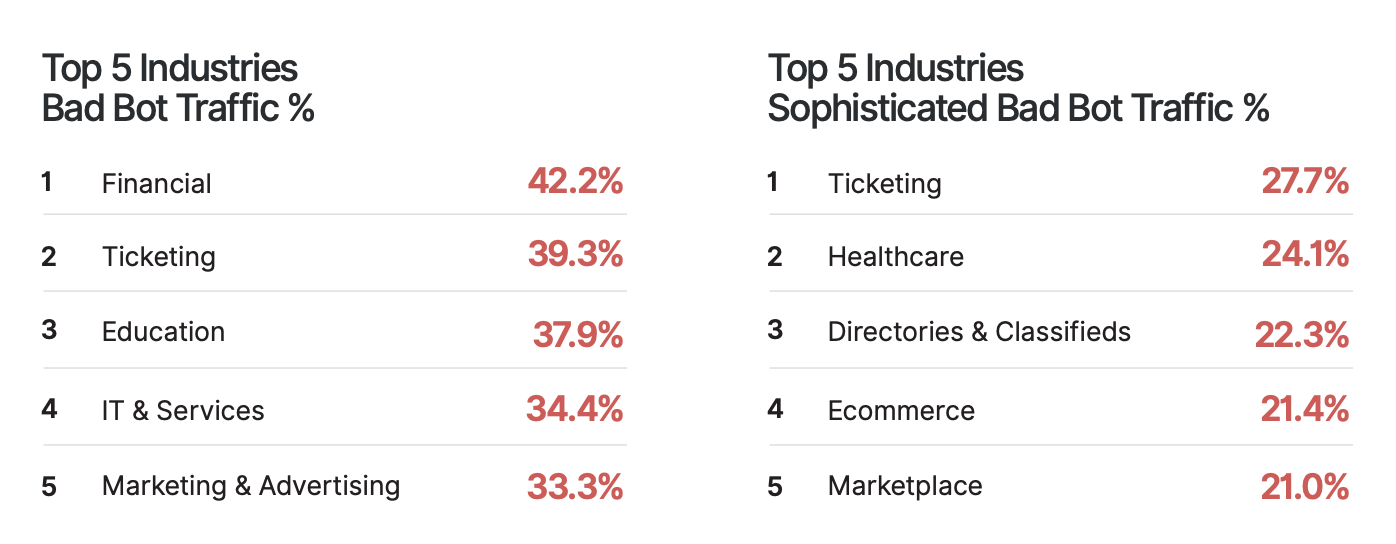

The table above shows the top 5 industries most affected by bad bot traffic.

DATA BREACHING USING BOTS

Data breaches are a serious problem. According to the 2019 Data Threat Report from thalesesecurity.com, about 97% of respondents are using sensitive data with digitally transformative technologies. Globally, 60% say they have been breached at some point in their history, with 30% experiencing a breach within the past year alone. In the U.S. the numbers are even higher: 65% have experienced a breach at some point, and 36% within the past year.

Stolen customer data and credentials are a serious problem for any business that collects payment details. Every data breach releases more credentials into circulation, which in turn makes the bot problem worse.

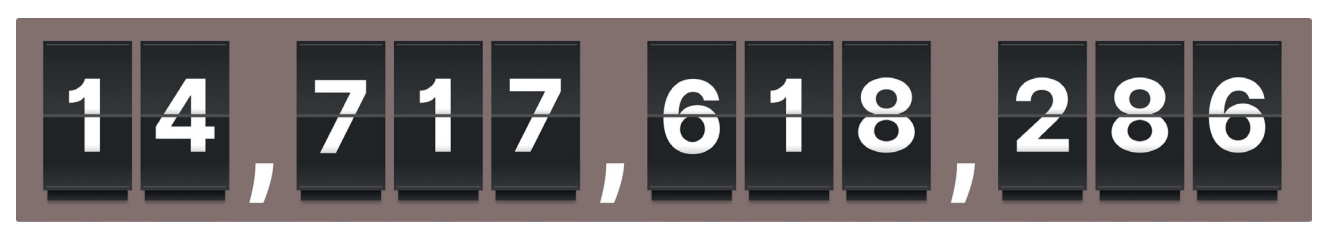

The chart above shows the amount of data breached in 2013. As bad bots become easier to access, the problem keeps growing.

Source - Distil Networks 2019 report

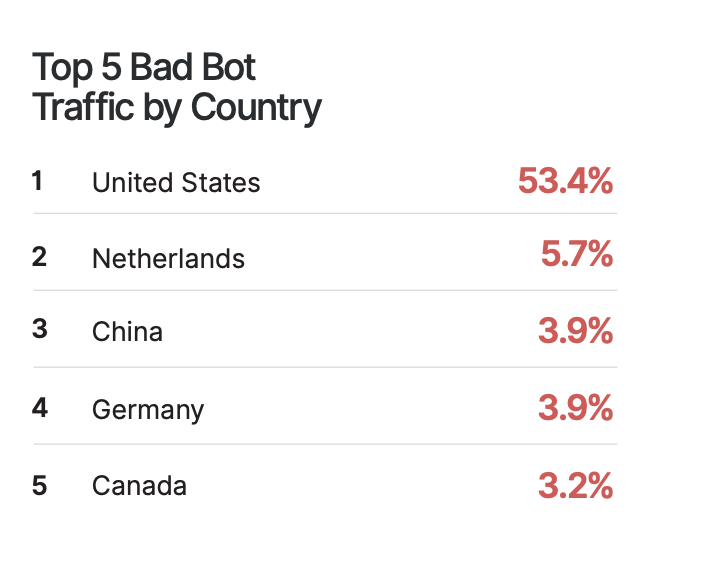

Bad bots are found all over the world, and the US ranks highest for bad bot traffic.

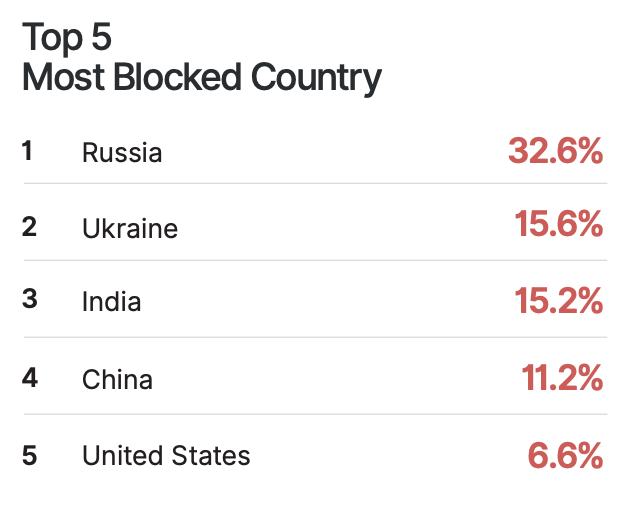

While bad bot traffic remains high, countries are working hard to improve security for their citizens. The chart above shows the top 5 countries that have been most successful at blocking bad bot traffic.

MALICIOUS ACTIVITIES OF BAD BOTS AND DETECTION

Bad bots have a wide range of uses and are deployed against most kinds of businesses. Here are a few of their malicious activities, along with signs that can help you detect them.

1. PRICE SCRAPING:

E-commerce today is all about offering products at the best price. Because customers are likely to buy from whichever site sells a product cheapest, competitors need to know at all times what every other site charges, so they use bots to scrape that pricing data. Combined with good SEO, this can quickly make them market leaders.

If your site experiences sudden slowdowns and downtime, aggressive scrapers may be the cause. Industries that show pricing on their sites, such as e-commerce, gambling, airlines, and travel, are affected by this.
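One way to look for aggressive scrapers is to count requests per client IP per minute in the web server's access log. The sketch below assumes the common/combined log format (client IP in field 1, timestamp in field 4); the function name `flag_fast_clients`, the sample log lines and the threshold are hypothetical and would need tuning to real traffic.

```sh
#!/bin/sh
# Sketch: print client IPs that exceed a per-minute request threshold.

flag_fast_clients() {
  # $1 = max requests per IP per minute; access log lines on stdin.
  awk -v max="$1" '
    {
      minute = substr($4, 2, 17)   # "[10/Apr/2019:10:09:23" -> "10/Apr/2019:10:09"
      count[$1 " " minute]++
    }
    END {
      for (key in count)
        if (count[key] > max + 0) { split(key, k, " "); flagged[k[1]] = 1 }
      for (ip in flagged) print ip
    }
  ' | sort
}

# Hypothetical sample: one IP makes 5 requests in a minute, another makes 1.
sample_log() {
  for i in 1 2 3 4 5; do
    printf '203.0.113.7 - - [10/Apr/2019:10:09:%02d +0000] "GET /product/%d HTTP/1.1" 200 512\n' "$i" "$i"
  done
  printf '198.51.100.4 - - [10/Apr/2019:10:09:30 +0000] "GET / HTTP/1.1" 200 1024\n'
}

sample_log | flag_fast_clients 3
```

Per-IP counting misses bots that rotate through many IP addresses, so treat it as a first filter rather than a complete defense.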

2. CONTENT SCRAPING:

Content drives business for many industries, whether it is public website content or sensitive information they have stored. Scraped content republished elsewhere creates duplicates that can damage your SEO ranking. If your site has unexplained downtime, check for bots: there is a good chance someone is scraping your content.

Industries affected by this include job boards, classifieds, marketplaces, digital publishing, and real estate, all of which rely on proprietary content.
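A common heuristic (an assumption here, not something the sources above prescribe) is that real browsers fetch a page's CSS, JavaScript and images along with the HTML, while simple scrapers request only the pages. The sketch flags IPs that request many pages but no static assets. The function name `flag_asset_less_clients`, the extension list and the sample data are hypothetical, and the combined log format is assumed (request path in field 7).

```sh
#!/bin/sh
# Sketch: flag IPs that fetch many pages but never any static assets.

flag_asset_less_clients() {
  # $1 = minimum page requests before an IP is judged; access log on stdin.
  awk -v min="$1" '
    {
      path = $7
      sub(/\?.*/, "", path)                  # ignore query strings
      if (path ~ /\.(css|js|png|jpe?g|gif|svg|ico|woff2?)$/) assets[$1]++
      else pages[$1]++
    }
    END {
      for (ip in pages)
        if (pages[ip] >= min + 0 && !(ip in assets)) print ip
    }
  ' | sort
}

sample_log() {
  # Hypothetical browser: one page plus its stylesheet and script.
  printf '%s\n' \
    '192.0.2.10 - - [10/Apr/2019:10:00:00 +0000] "GET /jobs/1 HTTP/1.1" 200 900' \
    '192.0.2.10 - - [10/Apr/2019:10:00:01 +0000] "GET /static/site.css HTTP/1.1" 200 300' \
    '192.0.2.10 - - [10/Apr/2019:10:00:01 +0000] "GET /static/app.js?v=3 HTTP/1.1" 200 300'
  # Hypothetical scraper: several listing pages, no assets.
  for i in 1 2 3 4; do
    printf '203.0.113.9 - - [10/Apr/2019:10:00:0%d +0000] "GET /jobs/%d HTTP/1.1" 200 900\n' "$i" "$i"
  done
}

sample_log | flag_asset_less_clients 3
```

Headless-browser bots of the "moderately sophisticated" kind described earlier do load assets, so this check only catches simpler scrapers.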

3. ACCOUNT TAKEOVER:

Account takeover is a common threat for any website with a login page. Bots use breached customer credentials to eventually take over accounts, resulting in financial fraud, account lockouts, and a bad reputation for the company. Most attackers do this to steal loyalty points and credit card details or to make unauthorized purchases.
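Credential-stuffing bots typically try many different accounts from the same source, whereas a real user who mistypes a password fails on one account. The sketch below flags IPs with failed logins against many distinct usernames. The input format (`<ip> <username> <OK|FAIL>`), the function name and the threshold are hypothetical; adapt them to whatever your authentication log actually records.

```sh
#!/bin/sh
# Sketch: flag IPs whose failed logins span many distinct usernames.

flag_credential_stuffing() {
  # $1 = distinct usernames with failed logins from one IP before flagging.
  # Input (hypothetical format): "<ip> <username> <OK|FAIL>" per line on stdin.
  awk -v max="$1" '
    $3 == "FAIL" && !seen[$1 SUBSEP $2]++ { users[$1]++ }   # count each user once per IP
    END { for (ip in users) if (users[ip] >= max + 0) print ip }
  ' | sort
}

sample_auth_log() {
  printf '%s\n' \
    '198.51.100.20 alice FAIL' \
    '198.51.100.20 alice OK' \
    '203.0.113.66 alice FAIL' \
    '203.0.113.66 bob FAIL' \
    '203.0.113.66 carol FAIL' \
    '203.0.113.66 dave FAIL'
}

sample_auth_log | flag_credential_stuffing 3
```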

4. ACCOUNT CREATION:

Fake accounts are created simply to spam the company and damage its name. Bots create many user accounts and keep flooding the support team with questions. This kills the team's productivity, keeps it from helping people who are actually in need, and lowers conversion rates.

Commonly targeted platforms include cloud services, social media, and gambling sites.

5. CREDIT CARD FRAUD:

Criminals obtain a card number from one source and try to find the missing details they need, such as the CVV and expiry date, from other sources. This damages your business's fraud score, and customers may become too scared to do business with you. An increase in chargebacks and customer complaints is one sign of this activity.

6. DENIAL OF SERVICE:

Abnormal, unexplained spikes in traffic to particular resources are one sign of a denial-of-service attack. The extraordinary volume of traffic slows the website down, ultimately leading to revenue loss.
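A simple way to surface such spikes is to compare each minute's request count for a resource against the average of the preceding minutes. In this sketch, the input format (`<minute> <count>`), the function name, the window size and the spike factor are hypothetical choices.

```sh
#!/bin/sh
# Sketch: flag minutes whose request count exceeds factor x trailing average.

flag_spikes() {
  # $1 = window (minutes of history), $2 = spike factor.
  # Input: "<minute> <request count>" lines in time order on stdin.
  awk -v window="$1" -v factor="$2" '
    {
      if (n >= window + 0) {
        sum = 0
        for (i = n - window; i < n; i++) sum += hist[i]   # trailing window
        if ($2 > factor * sum / window) print $1, $2
      }
      hist[n++] = $2
    }
  '
}

sample_counts() {
  printf '%s\n' '10:00 100' '10:01 110' '10:02 90' '10:03 105' '10:04 950' '10:05 100'
}

sample_counts | flag_spikes 3 3
```

A spike also inflates the baseline for the next few minutes, so a sustained attack can stop being flagged; more robust baselines (medians, longer windows) reduce that effect.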

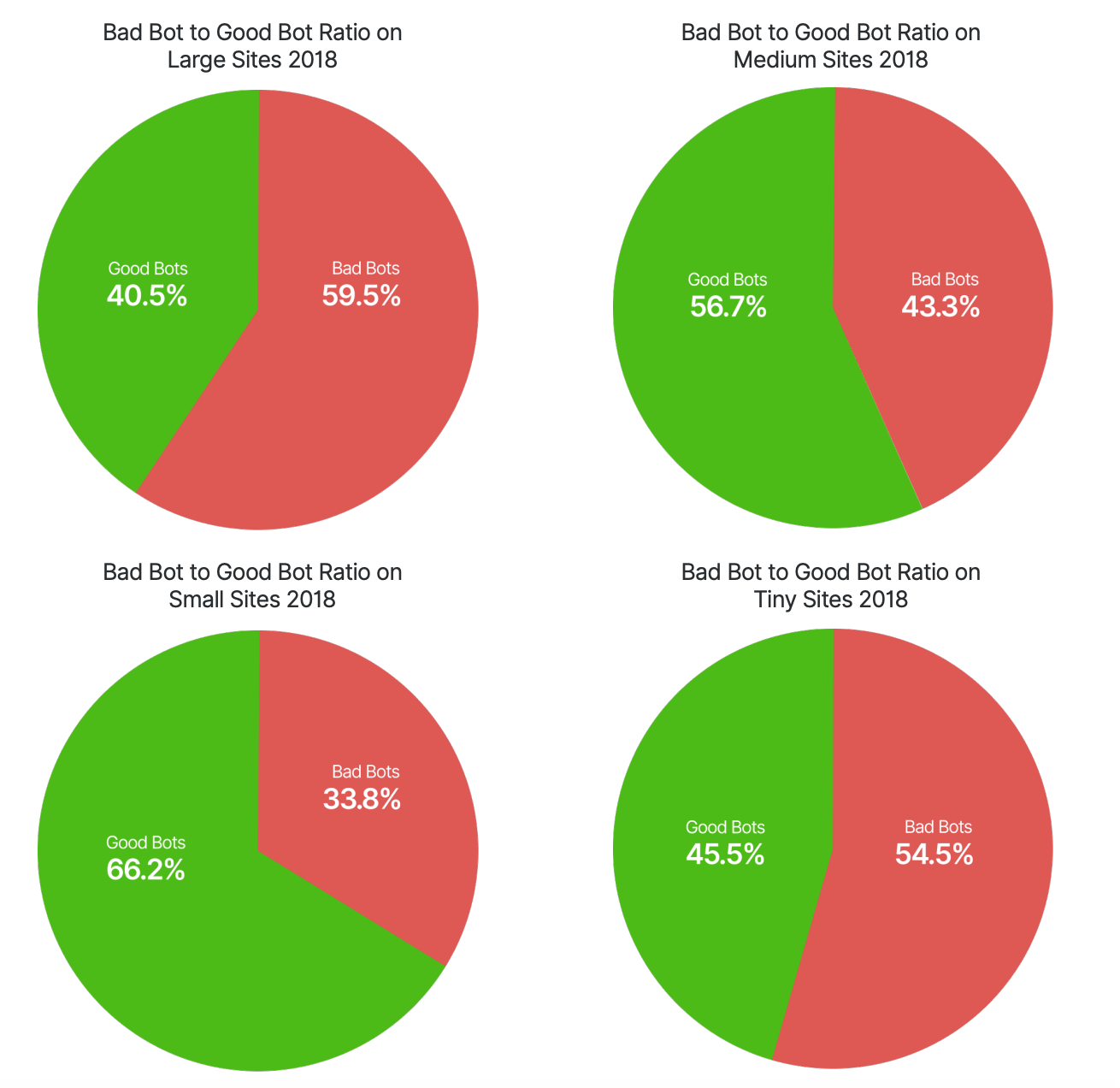

The size of your website plays a pivotal role in how many bad bots it attracts. The chart above shows the percentage of bot traffic in 2018 for websites grouped into tiny, small, medium, and large.

DETECTING A MALICIOUS ENTITY

A security solution installed in the application can stop malicious entities that operate botnets for activities such as credential theft and fraud. Every bot accessing the internet has an IP address associated with it, and when a bot performs a malicious activity, that IP address can be blacklisted. Some sites import entire lists of blacklisted IP addresses and block them as a precaution.
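Checking clients against an IP blocklist can be sketched in a few lines of awk. This version assumes a hypothetical blocklist file with one IPv4 address or CIDR range per line (`#` comments allowed) and ignores IPv6. The function name is hypothetical, and the sample entries use the reserved documentation ranges rather than real attacker addresses.

```sh
#!/bin/sh
# Sketch: is a client IPv4 address covered by any entry in a blocklist file?

is_blocked() {
  # $1 = blocklist file, $2 = client IP; exit status 0 if blocked.
  awk -v ip="$2" '
    function to_int(addr,   o) {
      split(addr, o, ".")
      return ((o[1] * 256 + o[2]) * 256 + o[3]) * 256 + o[4]
    }
    BEGIN { target = to_int(ip) }
    $1 == "" || substr($1, 1, 1) == "#" { next }     # skip blanks and comments
    {
      n = split($1, part, "/")
      len = (n == 2) ? part[2] : 32                  # bare address = /32
      size = 2 ^ (32 - len)
      # Same network if both addresses fall in the same block of "size" addresses.
      if (int(to_int(part[1]) / size) == int(target / size)) { hit = 1; exit }
    }
    END { if (hit) exit 0; exit 1 }
  ' "$1"
}

blocklist=$(mktemp)
trap 'rm -f "$blocklist"' EXIT
cat > "$blocklist" <<'EOF'
# hypothetical entries (documentation ranges)
203.0.113.7
198.51.100.0/24
EOF

is_blocked "$blocklist" 198.51.100.42 && echo "198.51.100.42 is blocked"
```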

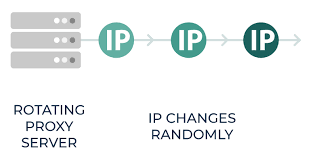

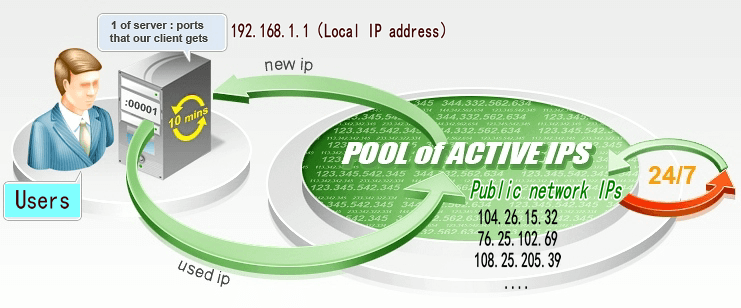

In some cases, attackers use rotating IP addresses: from a pool of IP addresses, one is picked at random and used to request the web page.

Source: Smart Proxy

If the request succeeds, the page is returned; if that IP address is banned, another one is picked from the pool. Managing this manually takes a lot of effort, but installing a Scrapy rotating proxy automates it.

Source: best paid proxies

Let us now see how to detect bot activity that is routed through private proxy servers, using the tools and findings of ThreatX Labs.

Here, a customer's site suddenly received a large number of requests with "proxy_ip:X.X.X.X:60000" in the "User-Agent" header. Suspiciously, these requests all carried the same header but came from different IP addresses.

    GET /path/to/resource.css HTTP/1.1
    User-Agent: proxy_ip:X.X.X.X:60000 Mozilla/5.0 (Macintosh; Intel Mac OS X 10_13_6) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/70.0.3538.102 Safari/537.36

As a next step, the prevalence of each User-Agent was observed and tracked. The "proxy_ip:" User-Agent turned out to be sent only with requests for static resources. All such requests were pulled and checked:

    $ awk -F\" '{print $6}' botnet-ip-requests | grep -oP "Mozilla.+" | sort -u
    Mozilla/5.0 (Macintosh; Intel Mac OS X 10_13_6) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/70.0.3538.102 Safari/537.36
    proxy_ip:X.X.X.X:60000 Mozilla/5.0 (Macintosh; Intel Mac OS X 10_13_6) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/70.0.3538.102 Safari/537.36

All the requests presented the same browser User-Agent (Chrome 70.0.3538 on Mac OS X 10_13_6); the only thing that changed was the IP address. Correlating IP addresses with this User-Agent header across multiple customer sites in the database showed that about 7,400 IP addresses were involved:

    $ wc -l ~/observed-botnet-ips
    7419 observed-botnet-ips
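The User-Agent prevalence check described above can be approximated with awk: for each User-Agent string, count how many distinct client IPs sent it. A string shared by thousands of IPs, like the `proxy_ip:` one here, rises to the top. This sketch assumes the combined log format (User-Agent in the 6th field when splitting on double quotes, as in the `awk -F\"` command above); the function name and sample lines are hypothetical.

```sh
#!/bin/sh
# Sketch: rank User-Agent strings by the number of distinct client IPs using them.

ua_prevalence() {
  awk -F'"' '
    {
      split($1, pre, " ")                       # pre[1] is the client IP
      if (!seen[$6 SUBSEP pre[1]]++) ips[$6]++  # count each IP once per UA
    }
    END { for (ua in ips) print ips[ua] "\t" ua }
  ' | sort -t "$(printf '\t')" -k1,1nr
}

sample_log() {
  for ip in 192.0.2.1 198.51.100.2 203.0.113.3; do
    printf '%s - - [10/Apr/2019:10:00:00 +0000] "GET /a.css HTTP/1.1" 200 10 "-" "%s"\n' \
      "$ip" 'proxy_ip:X.X.X.X:60000 Mozilla/5.0'
  done
  printf '%s - - [10/Apr/2019:10:00:05 +0000] "GET / HTTP/1.1" 200 99 "-" "%s"\n' \
    192.0.2.50 'Mozilla/5.0'
}

sample_log | ua_prevalence
```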

WAF signatures were then deployed to block this traffic.

ANALYZING THE PROXY

Next, requests were sent directly to the IP addresses involved in the attack, which returned the following error:

    $ curl -v http://X.X.X.X:60000
    < HTTP/1.1 400 Bad Request
    < Server: squid
    < X-Squid-Error: ERR_INVALID_URL 0
    ...
    <h1>ERROR</h1>
    <h2>The requested URL could not be retrieved</h2>
    ...
    <p>Generated Wed, 10 Apr 2019 10:09:23 GMT by localhost (squid)</p>
    <!-- ERR_INVALID_URL -->

This confirmed that the IP address belonged to a proxy server (Squid), used to hide the attacker's original IP address. Next, a request was sent through the proxy itself:

    $ curl --proxy http://X.X.X.X:60000 http://blog.threatx.com -v
    < HTTP/1.1 407 Proxy Authentication Required
    < Server: squid
    < X-Squid-Error: ERR_CACHE_ACCESS_DENIED 0
    < Proxy-Authenticate: Basic realm="Private"
    ...
    <h1>ERROR</h1>
    <h2>Cache Access Denied.</h2>
    ...
    <p>Generated Wed, 10 Apr 2019 10:16:49 GMT by localhost (squid)</p>
    <!-- ERR_CACHE_ACCESS_DENIED -->
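The two probes above can be turned into a small triage helper. It is a heuristic sketch: a `Server: squid` header on a direct request suggests a Squid proxy is listening, and the status code of a request sent through the host separates authenticated proxies (407) from open ones that relay the request (2xx/3xx). The function names are hypothetical, and the input is raw response headers (for example from `curl -s -o /dev/null -D -`), without the `< ` prefixes that `curl -v` adds.

```sh
#!/bin/sh
# Sketch: triage a suspected proxy from captured response headers.

is_squid() {
  # $1 = headers from a direct request to the host; exit status 0 if Squid answered.
  printf '%s\n' "$1" | grep -qi '^server:[[:space:]]*squid'
}

classify_proxy_probe() {
  # $1 = status line and headers from a request sent *through* the host.
  code=$(printf '%s\n' "$1" | awk 'NR == 1 { print $2 }')
  case "$code" in
    407)     echo "private proxy (authentication required)" ;;
    2??|3??) echo "open proxy (request relayed)" ;;
    *)       echo "inconclusive (status $code)" ;;
  esac
}

# Header values taken from the two probes shown above.
direct='HTTP/1.1 400 Bad Request
Server: squid
X-Squid-Error: ERR_INVALID_URL 0'
via_proxy='HTTP/1.1 407 Proxy Authentication Required
Server: squid
Proxy-Authenticate: Basic realm="Private"'

is_squid "$direct" && echo "direct probe: Squid proxy listening"
classify_proxy_probe "$via_proxy"
```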

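The 407 Proxy Authentication Required response shows that these are private, authenticated proxies rather than open ones, indicating that a customized set of proxies was used to pull off this attack. The IP addresses were also concentrated in a small number of networks: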

    $ netcat whois.cymru.com 43 < ~/observed-botnet-ips | awk -F\| '{print $3}' | sort | uniq -c | sort -nr
    1651 104.164.0.0/15
    ...
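If the Team Cymru whois service is unavailable, a rough offline stand-in is to bucket the addresses by /24. This is a substitute technique, not what the investigation ran: routed prefixes vary in size (the /15 above, for instance), which only a routing or whois lookup reveals. The function name and sample addresses are hypothetical.

```sh
#!/bin/sh
# Sketch: count IPv4 addresses (one per line on stdin) per /24, largest first.

count_by_slash24() {
  awk -F. 'NF == 4 { print $1 "." $2 "." $3 ".0/24" }' | sort | uniq -c | sort -nr
}

printf '%s\n' 203.0.113.5 203.0.113.77 203.0.113.200 198.51.100.9 | count_by_slash24
```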

Then, masscan was used to scan these networks for neighboring proxies:

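    $ cat ~/proxy-network-list | while read -r network ; do
        # turn "104.164.0.0/15" into "104.164.0.0_15" for use in a filename
        network_=$(echo "${network}" | sed 's/\//_/')
        # scan tcp/60000 across the whole prefix, capped at 10,000 packets/second
        masscan -p60000 "${network}" -Pn \
          --max-rate 10000 \
          --output-format json \
          --output-filename "masscan-${network_}.json"
      done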

Over 28,000 hosts were found listening on tcp/60000:

    $ wc -l ~/proxy-network-live-ips
    28140 proxy-network-live-ips

Further experiments showed that all of these proxies are used together in an attack. The network prefixes were then summarized and the number of live proxies in each network was counted:

    $ netcat whois.cymru.com 43 < ~/proxy-network-live-ips | awk -F\| '{print $3}' | sort | uniq -c | sort -nr
    5504 104.164.0.0/15
    ...

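The proxy density, meaning the share of addresses in a network that run a live proxy, was then used to classify each network: high-density networks were confirmed as controlled by the attackers, while low-density ones were flagged as suspicious.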

Final result:

    # confirmed networks (high proxy density)
    Count  Network/CIDR       Organization
    4048   38.79.208.0/20     PSINet, Inc. / Cogent
    4035   38.128.48.0/20     PSINet, Inc. / Cogent
    2019   216.173.64.0/21    Network Layer Technologies Inc
    254    166.88.170.0/24    YHSRV / EGIHosting
    249    206.246.67.0/24    Nomurad SSE (C04726371) / NuNet Inc.
    248    206.246.115.0/24   NuNet Inc.
    248    104.171.148.0/24   DedFiberCo
    247    216.198.86.0/24    CloudRoute, LLC
    247    206.246.89.0/24    Expressway Agriculture (C04648072) / NuNet Inc.
    247    206.246.73.0/24    INTERNETCRM, INC. (C04860575) / NuNet Inc.
    247    206.246.102.0/24   ? / NuNet Inc.
    247    104.171.158.0/24   DedFiberCo
    246    104.237.244.0/24   DedFiberCo
    246    104.171.150.0/24   DedFiberCo
    244    104.171.157.0/24   DedFiberCo

    # suspicious networks (low proxy density)
    Count  Network/CIDR       Organization
    5504   104.164.0.0/15     EGIHosting
    2741   181.177.64.0/18    My Tech BZ
    1014   107.164.0.0/16     EGIHosting
    1011   107.164.0.0/17     EGIHosting
    505    166.88.160.0/19    EGIHosting
    251    142.111.128.0/19   EGIHosting
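The proxy density behind the two lists can be made concrete: divide the number of live proxies by the number of addresses in the prefix, 2^(32 - prefix length). The sketch below applies this to rows copied from the table. The function name and output layout are hypothetical, and since the cut-off used to separate confirmed from suspicious networks is not stated, none is applied here.

```sh
#!/bin/sh
# Sketch: live-proxy density per network = count / 2^(32 - prefix length).

proxy_density() {
  # Input: "<live proxy count> <network/CIDR>" per line on stdin.
  awk '{
    split($2, p, "/")
    size = 2 ^ (32 - p[2])                 # addresses in the prefix
    printf "%s\t%d/%d\t%.1f%%\n", $2, $1, size, 100 * $1 / size
  }'
}

printf '%s\n' '4048 38.79.208.0/20' '5504 104.164.0.0/15' | proxy_density
```

For example, 38.79.208.0/20 comes out at about 98.8% (4,048 of 4,096 addresses), while 104.164.0.0/15 is about 4.2% (5,504 of 131,072), matching their placement in the confirmed and suspicious lists.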

About the author

Rachael Chapman

A complete gamer and tech geek who brings all her thoughts and love into writing techie blogs.
